```pascal
// For brevity, we show only the relevant part of the typical GameViewMain unit.
// We assume you created in the editor,
// and put in the published section of your view, this component:
// ButtonExitGame: TCastleButton;

uses CastleApplicationProperties;

procedure TViewMain.Start;
begin
  inherited;
  ButtonExitGame.Exists := ApplicationProperties.TouchDevice;
end;
```

Touch Input

1. Introduction

Some devices (Android, iOS, Nintendo Switch) have a touch screen, where the user literally touches the screen to click or drag.

This page discusses various aspects of handling touch screen input in your applications. The general principle is that you can handle touch screen input in the same way as mouse input. This is because:

- Touching the screen is reported as pressing the left mouse button. So all mouse-handling code will work for touch screens too.

- Events of built-in controls naturally work with touch screens out of the box. For example, a button will react to touch on mobile devices, generating the TCastleButton.OnClick event.

Note: Desktop devices can actually have a touch screen too. For example, Steam Deck offers a touch screen on a platform that is technically just Linux. Right now we don't have any special support for it; we just listen to mouse events from the OS.

2. Handling events

2.1. Simple: Handle touch input by handling mouse (left) button clicks

Touches on the touch screen are reported exactly like left mouse button presses on desktops. This is generally what you want, as it gives you cross-platform support for both mouse and touch.

So to write a cross-platform application that responds to both touches and mouse, just react to:

- TCastleUserInterface.Press with mouse button buttonLeft. For example, override the view method Press.

- TCastleUserInterface.Release with mouse button buttonLeft. You can override the view method Release.

- TCastleUserInterface.Motion when buttonLeft is in TInputMotion.Pressed. You can override the view method Motion.
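The three callbacks listed above can be sketched as follows. This is only a minimal sketch, assuming a view class TViewMain (as in the other snippets on this page) that declares the Press, Release and Motion overrides; the logging calls are placeholders for your own logic.

```pascal
// Sketch only: relevant part of a GameViewMain unit.
// We assume TViewMain declares these overrides in its public section
// (this requires CastleKeysMouse in the interface uses clause):
//   function Press(const Event: TInputPressRelease): Boolean; override;
//   function Release(const Event: TInputPressRelease): Boolean; override;
//   function Motion(const Event: TInputMotion): Boolean; override;

uses CastleKeysMouse, CastleVectors, CastleLog;

function TViewMain.Press(const Event: TInputPressRelease): Boolean;
begin
  Result := inherited;
  if Result then Exit; // already handled by the ancestor

  // True for a left mouse click on desktop and for a touch on mobile.
  if Event.IsMouseButton(buttonLeft) then
  begin
    WritelnLog('Pressed at ' + Event.Position.ToString);
    Exit(true); // mark the event as handled
  end;
end;

function TViewMain.Release(const Event: TInputPressRelease): Boolean;
begin
  Result := inherited;
  if Result then Exit;

  if Event.IsMouseButton(buttonLeft) then
  begin
    WritelnLog('Released at ' + Event.Position.ToString);
    Exit(true);
  end;
end;

function TViewMain.Motion(const Event: TInputMotion): Boolean;
begin
  Result := inherited;
  if Result then Exit;

  // Dragging: the left button (or a finger) is held down while moving.
  if buttonLeft in Event.Pressed then
    WritelnLog('Dragging from ' + Event.OldPosition.ToString +
      ' to ' + Event.Position.ToString);
end;
```

The same code path serves both mouse and touch, so there is no need to branch on the device type here.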

2.2. Multi-touch support

If you additionally want to handle multi-touch on touch devices, you can also look at:

- TInputPressRelease.FingerIndex at each Press / Release event with buttonLeft. Note that when using an actual mouse on desktops, we always report FingerIndex = 0.

- To know the currently pressed fingers, look at TCastleContainer.Touches.
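A minimal sketch of reading both pieces of information could look like this. It assumes that the touch list is enumerated with Container.TouchesCount and that each Container.Touches[I] entry exposes FingerIndex and Position; please confirm these names against the API reference. Logging in every Update call is only for illustration.

```pascal
// Sketch only: relevant part of a GameViewMain unit.
// We assume TViewMain declares these overrides in its public section:
//   function Press(const Event: TInputPressRelease): Boolean; override;
//   procedure Update(const SecondsPassed: Single;
//     var HandleInput: Boolean); override;

uses SysUtils, CastleKeysMouse, CastleUIControls, CastleVectors, CastleLog;

function TViewMain.Press(const Event: TInputPressRelease): Boolean;
begin
  Result := inherited;
  if Result then Exit;

  if Event.IsMouseButton(buttonLeft) then
  begin
    // FingerIndex tells simultaneous touches apart;
    // an actual mouse always reports 0.
    WritelnLog(Format('Finger %d pressed at %s',
      [Event.FingerIndex, Event.Position.ToString]));
    Exit(true);
  end;
end;

procedure TViewMain.Update(const SecondsPassed: Single;
  var HandleInput: Boolean);
var
  I: Integer;
begin
  inherited;
  // Enumerate all fingers currently touching the screen.
  // Assumption: TouchesCount gives the number of valid Touches[] entries.
  for I := 0 to Container.TouchesCount - 1 do
    WritelnLog(Format('Finger %d is at %s',
      [Container.Touches[I].FingerIndex,
       Container.Touches[I].Position.ToString]));
end;
```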

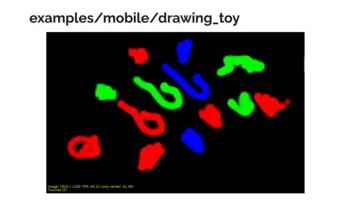

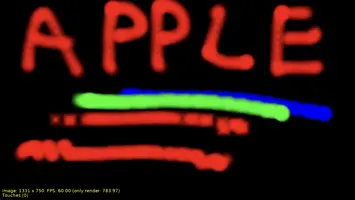

See example in examples/mobile/drawing_toy for a demo using multi-touch. Screens below are from Android and iOS.

2.3. Remember what is not possible with touch input

Remember that some things are not possible with a touch screen. Namely:

- You will never have a mouse press with a button other than buttonLeft.

- You will never observe motion when no mouse button is pressed, since we can only observe dragging while the user is touching the screen.

3. Detecting devices with touch screen

If you want to behave differently on devices with a touch screen, check ApplicationProperties.TouchDevice.

While you should avoid relying on it (it is better to just write code that makes sense for both touch and mouse), sometimes you may want to do something special for touch devices. For example, you may want to display buttons for some actions on touch devices, while on desktops the same actions can be performed with a keyboard shortcut. A typical case is an "Exit Game" button shown on touch devices, while on desktops the user can just press the Escape key.
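A minimal sketch of the "Exit Game" idea follows. It assumes a ButtonExitGame component designed in the editor, a hypothetical ClickExitGame handler, and that "exit" simply means closing the application with Application.Terminate; a mobile game may prefer to return to a menu view instead.

```pascal
// Sketch only: relevant part of a GameViewMain unit.
// We assume the view declares:
//   published  ButtonExitGame: TCastleButton;
//   private    procedure ClickExitGame(Sender: TObject);  // hypothetical name
//   public     function Press(const Event: TInputPressRelease): Boolean; override;

uses CastleApplicationProperties, CastleKeysMouse, CastleWindow;

procedure TViewMain.Start;
begin
  inherited;
  // Show the button only on devices that likely have no keyboard.
  ButtonExitGame.Exists := ApplicationProperties.TouchDevice;
  ButtonExitGame.OnClick := {$ifdef FPC}@{$endif} ClickExitGame;
end;

procedure TViewMain.ClickExitGame(Sender: TObject);
begin
  // Assumption: "exit" means closing the application.
  Application.Terminate;
end;

function TViewMain.Press(const Event: TInputPressRelease): Boolean;
begin
  Result := inherited;
  if Result then Exit;

  // Keyboard shortcut for the same action, useful wherever a keyboard exists.
  if Event.IsKey(keyEscape) then
  begin
    Application.Terminate;
    Exit(true);
  end;
end;
```

Keeping the Escape handling unconditional means it also works when a keyboard is attached to a touch device.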

To test how such code behaves on desktop, you can do any of the following (all the methods below do the same thing):

- Run the application from the editor, first selecting "Run → Run Parameters → Pretend Touch Device" from the editor menu.

- Run the application from the command line, adding the --pretend-touch-device option.

- Or just manually set the ApplicationProperties.TouchDevice property to true.

For example, this allows you to test how the "Exit Game" button, mentioned above as an example, looks and works on a desktop.
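The last option, setting the property manually, could be sketched like this. The PRETEND_TOUCH symbol and the helper name are hypothetical, introduced only for this illustration; the idea is to keep the override out of release builds.

```pascal
uses CastleApplicationProperties;

// Hypothetical helper: call it early during application initialization,
// before any view reads ApplicationProperties.TouchDevice.
procedure PretendTouchDeviceIfRequested;
begin
  {$ifdef PRETEND_TOUCH} // hypothetical symbol, define it only in test builds
  ApplicationProperties.TouchDevice := true;
  {$endif}
end;
```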

Note: Some devices with a touch screen, like Android and Nintendo Switch, allow plugging in an external keyboard and mouse. We don't support it now in any special way, but we may in the future, and then the user will be able to use normal mouse behavior in your mobile game. It also means that inferring too much from ApplicationProperties.TouchDevice being true isn't wise: the device may have a touch screen, but the user may be using an external keyboard / mouse.

4. Detecting platforms using conditional compilation

Note: We do not recommend detecting touch devices by looking at compilation symbols. As described above, we recommend using ApplicationProperties.TouchDevice if you need conditional behavior based on touch devices.

If you really need to detect the platform at compile-time, you can use the following compilation symbols:

- ANDROID is defined on the Android platform.

- CASTLE_IOS is defined on the iOS platform (including iOS Simulator; FPC >= 3.2.2 also defines just IOS, but not for iOS Simulator).

- CASTLE_NINTENDO_SWITCH is defined on Nintendo Switch.
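As an illustration, the symbols above can be combined in a conditional block like the one below. This is a sketch, not engine code: the TargetPlatform function is a hypothetical name, and the CASTLE_ symbols are only defined when the project is compiled in a way that sets them, so a plain desktop compilation falls into the last branch.

```pascal
program PlatformName;

{$ifdef FPC}{$mode objfpc}{$H+}{$endif}

// Hypothetical helper: returns a name for the platform
// this code was compiled for.
function TargetPlatform: String;
begin
  {$if defined(ANDROID)}
  Result := 'Android';
  {$elseif defined(CASTLE_IOS)}
  Result := 'iOS';
  {$elseif defined(CASTLE_NINTENDO_SWITCH)}
  Result := 'Nintendo Switch';
  {$else}
  Result := 'other (for example desktop)';
  {$endif}
end;

begin
  Writeln('Compiled for: ', TargetPlatform);
end.
```

Note that this is decided at compile time, so it says nothing about whether the device actually has a touch screen.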

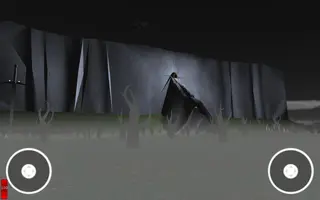

5. Navigating in 3D on touch devices using TCastleWalkNavigation

Our built-in navigation components TCastleExamineNavigation and TCastleWalkNavigation work nicely on mobile. They allow the user to perform typical navigation actions using the touch screen. In particular, TCastleExamineNavigation supports intuitive pinch and pan gestures using 2 fingers.

However, the experience of walking using only TCastleWalkNavigation is not optimal for many games. While you can move and rotate by dragging (see TCastleWalkNavigation.MouseDragMode), it is hard to do it precisely, which may be a problem in e.g. shooting games.

For this reason, we recommend adding TCastleTouchNavigation to your viewport to allow for easy 3D navigation on mobile. TCastleTouchNavigation displays a visual UI in the viewport corners, which the user can touch to move around and rotate the camera. It cooperates with TCastleWalkNavigation and TCastleExamineNavigation to provide a seamless experience on all platforms.

You can add it in the editor and at runtime just configure whether it exists, like this:

```pascal
// For brevity, we show only the relevant part of the typical GameViewMain unit.
// We assume you created in the editor,
// and put in the published section of your view, this component:
// MyTouchNavigation: TCastleTouchNavigation;

uses CastleApplicationProperties;

procedure TViewMain.Start;
begin
  inherited;
  MyTouchNavigation.Exists := ApplicationProperties.TouchDevice;
end;
```

You could also create it at runtime, like this:

```pascal
// For brevity, we show only the relevant part of the typical GameViewMain unit.
// We assume you created in the editor,
// and put in the published section of your view, this component:
// MyViewport: TCastleViewport;

uses CastleApplicationProperties;

procedure TViewMain.Start;
var
  TouchNavigation: TCastleTouchNavigation;
begin
  inherited;
  // FreeAtStop owns the component, so it is freed when the view stops.
  TouchNavigation := TCastleTouchNavigation.Create(FreeAtStop);
  // Fill the whole viewport, so the controls appear in its corners.
  TouchNavigation.FullSize := true;
  TouchNavigation.Viewport := MyViewport;
  // Choose the touch interface automatically.
  TouchNavigation.AutoTouchInterface := true;
  MyViewport.InsertFront(TouchNavigation);
end;
```

You can adjust what navigation controls are shown by TouchNavigation:

- It is easiest to set TouchNavigation.AutoTouchInterface to true on platforms where you want to use it (like mobile). You don't need to do anything more then, but optionally you can customize TouchNavigation.AutoWalkTouchInterface and TouchNavigation.AutoExamineTouchInterface.

- Alternatively, you can set TouchNavigation.AutoTouchInterface to false and manually adjust TouchNavigation.TouchInterface to the best navigation approach at any given moment.
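The manual approach could be sketched as below. This assumes a MyTouchNavigation component designed in the editor, and that tiWalk is one of the values of the TouchInterface property type; treat the value name as an assumption and check the API reference for the full list of values.

```pascal
// Sketch only: relevant part of a GameViewMain unit.
// We assume you put in the published section of your view:
// MyTouchNavigation: TCastleTouchNavigation;

uses CastleApplicationProperties, CastleViewport;

procedure TViewMain.Start;
begin
  inherited;
  MyTouchNavigation.Exists := ApplicationProperties.TouchDevice;

  // Take manual control of which on-screen controls are shown.
  MyTouchNavigation.AutoTouchInterface := false;
  // Assumption: tiWalk is a valid value, showing controls suited to walking.
  MyTouchNavigation.TouchInterface := tiWalk;
end;
```

You would then change TouchInterface again whenever the game switches to a different navigation style.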

|

Note

|

These two components nicely work in tandem if they both exist: When you use |

6. On-screen keyboard

Since mobile devices do not (usually) have a physical keyboard connected, we can show an on-screen keyboard when you focus an edit control.

This is supported on Android.

To make TCastleEdit receive focus and open the on-screen keyboard correctly in your applications:

- Use <service name="keyboard" /> in the CastleEngineManifest.xml.

- Set AutoOnScreenKeyboard to true on the TCastleEdit instance (you can set it from the editor, or from code).

- If you want to focus the edit before the user touches it, do it by Edit1.Focused := true.

See examples/mobile/on_screen_keyboard for the simplest working example.
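The code side of the steps above could be sketched like this, assuming an Edit1 component designed in the editor. The manifest entry from the first step is still required and is not shown here.

```pascal
// Sketch only: relevant part of a GameViewMain unit.
// We assume you put in the published section of your view:
// Edit1: TCastleEdit;

uses CastleControls;

procedure TViewMain.Start;
begin
  inherited;
  // Open the on-screen keyboard automatically when the edit gets focus.
  // This can also be set in the editor instead of in code.
  Edit1.AutoOnScreenKeyboard := true;
  // Optional: focus the edit before the user touches it.
  Edit1.Focused := true;
end;
```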

Warning: The on-screen keyboard is not supported on iOS yet. Subscribe to issue 554 on GitHub to get notified when it is implemented. Feel free to talk about this on Discord or the forum if you need it sooner; your feedback really affects the priorities when things are implemented, so speak up :) Donations toward this goal are also welcome!
